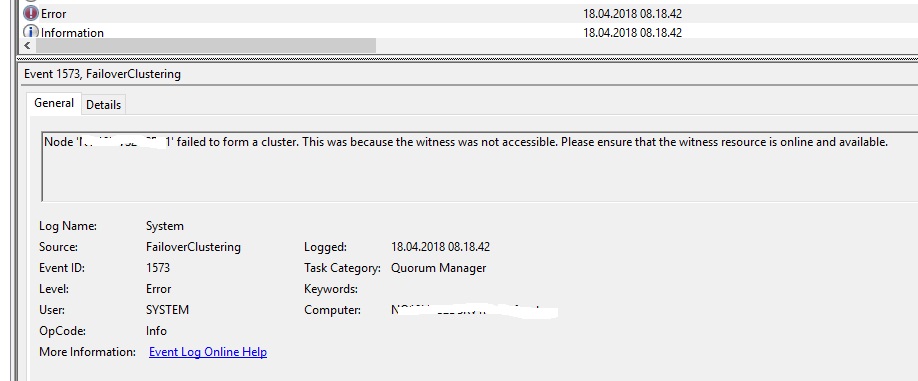

A client patched their 2-node S2D cluster this Sunday. When the second node came back up, it would not join the cluster. The client started troubleshooting without realizing that the quorum file share witness was not working: someone had deleted the folder that held the file share witness. At first, the node that was patched and booted first kept working. But at some point both nodes were rebooted over and over again.

This kept the cluster from coming online again. Several fixes were tried to force the cluster online, such as giving the quorum vote to only one node. At one point the second node was even evicted from the cluster. The logs kept screaming that there was no majority vote to bring the cluster online.

Every possible option was tried to bring the cluster online again. I tried setting the cluster quorum to a cloud witness with PowerShell, but that failed.

```powershell
Set-ClusterQuorum -CloudWitness -AccountName <StorageAccountName> -AccessKey <StorageAccountAccessKey>
```

Even when I ran Get-PhysicalDisk and Get-VirtualDisk, the disks were not showing.

So I had three options:

- Reinstall the nodes and wipe the S2D config on the storage pool with the Clear-SdsConfig.ps1 script
- Reinstall the nodes and keep the disk config for the S2D storage pool
- Try to reinstall the cluster role on the nodes

I ended up trying the third option first, as it was the fastest option I had, and I really did not want to reinstall the nodes.

So this is what I did.

Start by removing the cluster name from the domain controllers. Make sure it's removed on all domain controllers in the domain.
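If you prefer to do the cleanup from PowerShell, here is a minimal sketch. It assumes that "removing the cluster name" means deleting the cluster's computer object in Active Directory, that the ActiveDirectory RSAT module is installed, and that `ClusterName` is a placeholder for your own cluster name. None of this is from the original procedure, so treat it as an illustration only.

```powershell
# Assumption: the ActiveDirectory module (RSAT) is available on this machine.
Import-Module ActiveDirectory

# Placeholder: replace with the name of the broken cluster.
$clusterName = 'ClusterName'

# Find the cluster's computer object and remove it (you will be prompted to confirm).
$cno = Get-ADComputer -Filter "Name -eq '$clusterName'"
if ($cno) {
    $cno | Remove-ADObject -Recursive
}

# Check every domain controller to make sure the object is really gone.
foreach ($dc in (Get-ADDomainController -Filter *)) {
    $left = Get-ADComputer -Filter "Name -eq '$clusterName'" -Server $dc.HostName
    if ($left) {
        Write-Warning "$clusterName still exists on $($dc.HostName)"
    } else {
        Write-Output "$clusterName is gone from $($dc.HostName)"
    }
}
```

If a domain controller still shows the object, wait for replication and run the check again before recreating the cluster.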

Then run these PowerShell commands.

```powershell
# Define nodes
$S1 = "NODE1"
$S2 = "NODE2"
$nodes = ($S1, $S2)

# Uninstall the cluster role on the nodes (the nodes restart)
Invoke-Command -ComputerName $nodes -ScriptBlock {
    Remove-WindowsFeature Failover-Clustering -Restart
}

# Wait for the nodes to come back up

# Install the cluster role on the nodes; the nodes will restart if they need to
Invoke-Command -ComputerName $nodes -ScriptBlock {
    Install-WindowsFeature Failover-Clustering -IncludeAllSubFeature -IncludeManagementTools -Restart
}

# Recreate the cluster with the same config
New-Cluster -Name ClusterName -Node $nodes -NoStorage -StaticAddress 10.0.0.1

# Add a file share witness or a cloud witness
#Set-ClusterQuorum -NodeAndFileShareMajority \\fileserver\fsw
Set-ClusterQuorum -CloudWitness -AccountName <StorageAccountName> -AccessKey <StorageAccountAccessKey>

# Enable S2D again
Enable-ClusterS2D
```

You will get some warnings when creating the cluster and enabling S2D again, but these are OK.

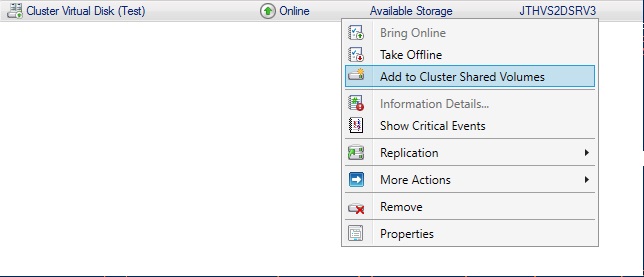
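Before moving on, it can be worth checking that the cluster really has its votes and witness back. The following is a small sketch of such a check, not part of the original procedure. It only uses the standard FailoverClusters and Storage cmdlets and should be run on one of the nodes.

```powershell
# Both nodes should be listed as Up, each with a vote (NodeWeight 1).
Get-ClusterNode | Format-Table Name, State, NodeWeight

# The quorum resource should now be the witness you configured.
Get-ClusterQuorum | Format-List Cluster, QuorumResource, QuorumType

# These returned nothing while the cluster was down; check them again now.
Get-PhysicalDisk | Format-Table FriendlyName, HealthStatus, OperationalStatus
Get-VirtualDisk | Format-Table FriendlyName, HealthStatus, OperationalStatus
```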

Once this is done, your Failover Cluster Manager will be empty. There are no VMs, no storage pool and no Cluster Shared Volumes. Go into Pools under Storage, click Add Storage Pool and choose your S2D pool. Once that's done, go into Disks and click Add Disk. Then choose the disks and add them as Cluster Shared Volumes.

You can do this with the GUI or with PowerShell:

```powershell
Get-ClusterAvailableDisk | Add-ClusterDisk
Get-ClusterResource | Where-Object {$_.OwnerGroup -eq 'Available Storage'} | Add-ClusterSharedVolume
```

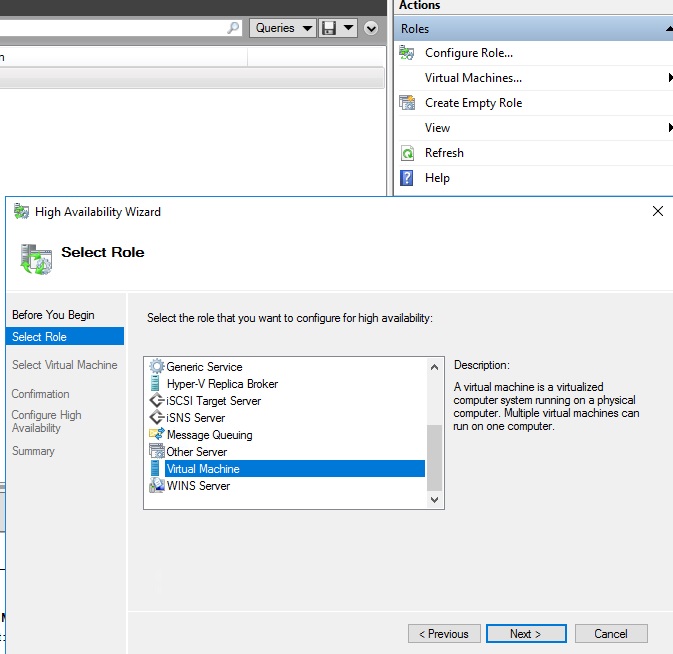

Now the volumes are back in the cluster and you can access the drives under C:\ClusterStorage\, so we need to add the VMs back to the cluster. First, go into Hyper-V Manager and import the VM configuration for each VM.

Once a VM is in Hyper-V Manager, it also needs to be added as a cluster role in Failover Cluster Manager. Go to FCM and add the VMs as Virtual Machine roles.

Now your VMs should be added as cluster roles and you can start them up.
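With many VMs, the import and the cluster role step can also be scripted. The sketch below is an illustration under a few assumptions that are not from the original procedure: the VMs use the Windows Server 2016 `.vmcx` configuration format, each configuration file sits in the default `Virtual Machines` folder somewhere under `C:\ClusterStorage`, and the VMs are registered in place rather than copied. Run it on one of the cluster nodes.

```powershell
# Find VM configuration files on the Cluster Shared Volumes.
# Only files directly inside a "Virtual Machines" folder are used,
# so checkpoint configurations in "Snapshots" folders are skipped.
$configs = Get-ChildItem -Path 'C:\ClusterStorage' -Recurse -Filter '*.vmcx' -File |
    Where-Object { $_.Directory.Name -eq 'Virtual Machines' }

foreach ($config in $configs) {
    # Register the VM in place in Hyper-V (no -Copy, so the files are not moved).
    $vm = Import-VM -Path $config.FullName

    # Make the VM highly available by adding it as a cluster role.
    Add-ClusterVirtualMachineRole -VMName $vm.Name
}
```

Importing one VM by hand first is a sensible way to confirm the folder layout matches these assumptions before running the loop.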

Update 19.04.2018

VMM

After everything was up again, a live migration within VMM was tried and failed. It complained about:

Error (12711)

VMM cannot complete the WMI operation on the server () because of an error: [MSCluster_ResourceGroup.Name="bd32d3bf-6cbc-47fc-98b2-796d2f98f998"] The cluster group could not be found.

This happens because a VM imported into Hyper-V Manager is not set up as highly available, and VMM does not pick up that the VM was later added in Failover Cluster Manager. So do a manual refresh of each virtual machine in VMM, and live migration should start to work.
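The manual refresh can also be done from the VMM PowerShell module instead of clicking through each VM. This is a minimal sketch, assuming the VirtualMachineManager module is installed, that `vmm01.contoso.local` is a hypothetical name for your VMM server, and that `NODE1` and `NODE2` are the cluster nodes as VMM knows them.

```powershell
# Assumption: the VMM console (and its PowerShell module) is installed here.
Import-Module VirtualMachineManager

# Hypothetical VMM server name; replace with your own.
$vmmServer = Get-SCVMMServer -ComputerName 'vmm01.contoso.local'

foreach ($node in 'NODE1', 'NODE2') {
    $vmHost = Get-SCVMHost -ComputerName $node -VMMServer $vmmServer

    # Refresh every VM on this host so VMM picks up the new cluster role.
    Get-SCVirtualMachine -VMHost $vmHost | ForEach-Object {
        Read-SCVirtualMachine -VM $_
    }
}
```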

DPM and other backup solutions

In DPM, if the machines were deployed with VMM, the names of the VMs in DPM start with SCVMM. After the VMs are imported in Hyper-V Manager, they no longer have SCVMM in the name in Failover Cluster Manager, so the old backup sets will not find the VMs. Remove the backups, but don't delete the backup data, and set up protection on the VMs again. This will trigger a synchronization of the machines.

2 thoughts on “How to bring your 2 node S2D cluster back up when witnes share is gone”

Hi JT – Great posts, thanks for sharing your experience – I have been researching S2D for a deployment we have coming up and came across a concerning sentence in the online documentation when looking into cluster resilience (quorum). I wonder if you can tell me what you make of it.

Understanding the cluster and pool quorum – specifically 5 nodes and beyond

Concerning statement

“Storage Spaces Direct cannot handle more than two nodes down anyway, so at this point, no witness is needed or useful”

Our deployment will be 6 nodes (3 x 3) – 3 nodes in one data hall and 3 nodes in another – each hall to be a failure domain. Would the above mean our cluster would stop if we lost one failure domain?

Thanks in advance.

Hello

Always use a quorum witness with S2D. It's the old way of thinking that you don't need one if you have more than 3 nodes. But always have one configured, either a file share witness or a cloud witness. And with only 3 nodes you can have 1 down, so you would want a quorum witness at that point.

If you need any help let me know.

JT