Four weeks ago I started my new job at Spirhed here in Norway, where I will be focusing on S2D, VMM, OMS, Azure Stack and other datacenter solutions. As we are deploying S2D with VMM, we wanted to build an easy-to-use but robust way of configuring VMM and deploying the physical hosts for S2D, configuring them all the way until we create the cluster and enable S2D. Deploying a host requires a BMC deep discovery of the host, which detects everything from disks and controllers to network cards. In my home lab I have Mellanox CX3 cards that I use for host management and SMB, so I am using a SET (Switch Embedded Teaming) switch with them, and I configured this in the script.

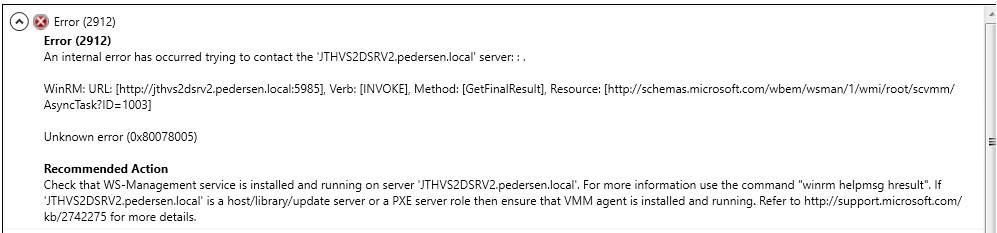

While deploying, the job would fail with a WinRM error message:

Error (2912)

An internal error has occurred trying to contact the ‘JTHVS2DSRV2.pedersen.local’ Server.

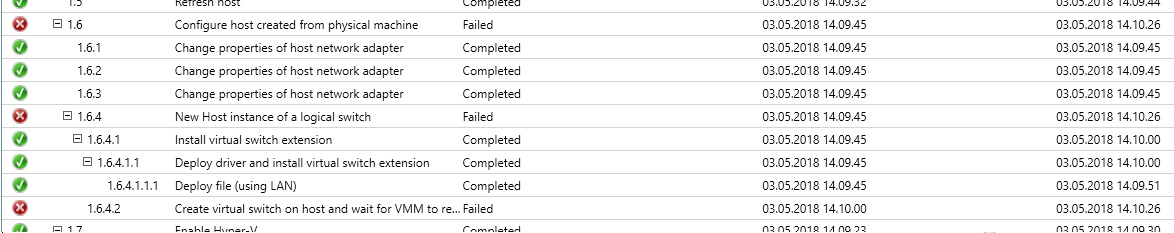

The job failed at the step that deploys the LogicalSwitch with the two Mellanox cards.

I then tried to add the LogicalSwitch manually with both pNICs, but it failed again with the same error message. If I deployed it with just one pNIC, everything was OK. So what I ended up doing was removing the line with the second pNIC from the script that bare-metal deploys the host, and then adding the second pNIC after the physical host deployment was done.

This solved the problem, and the deployment succeeded.
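The second half of that workaround, adding the second pNIC once the host is deployed, could look roughly like the sketch below. This is not the script from my deployment, only a minimal illustration of the idea using the VMM PowerShell module. The adapter name, uplink port profile set name and virtual switch name are placeholders I made up for the example, so replace them with the values from your own environment.

```powershell
# Sketch only: add the second physical NIC to a logical switch that was
# already deployed with a single pNIC. All names in quotes except the host
# name are hypothetical placeholders.
Import-Module VirtualMachineManager

$vmHost = Get-SCVMHost -ComputerName "JTHVS2DSRV2.pedersen.local"

# The adapter already in the switch, and the one left out during bare metal deployment.
$pNic1 = Get-SCVMHostNetworkAdapter -VMHost $vmHost -Name "Mellanox pNIC 1 (placeholder)"
$pNic2 = Get-SCVMHostNetworkAdapter -VMHost $vmHost -Name "Mellanox pNIC 2 (placeholder)"

# Group the changes into one job so they are applied together on the host.
$jobGroup = [Guid]::NewGuid().ToString()

# Give the second pNIC the same uplink port profile set as the first one.
$uplink = Get-SCUplinkPortProfileSet -Name "S2D-Uplink (placeholder)"
Set-SCVMHostNetworkAdapter -VMHostNetworkAdapter $pNic2 -UplinkPortProfileSet $uplink -JobGroup $jobGroup

# Update the existing virtual switch on the host so that it uses both adapters.
$vSwitch = Get-SCVirtualNetwork -VMHost $vmHost -Name "S2D-LogicalSwitch (placeholder)"
Set-SCVirtualNetwork -VirtualNetwork $vSwitch -VMHostNetworkAdapters @($pNic1, $pNic2) -JobGroup $jobGroup

# Commit the job group against the host.
Set-SCVMHost -VMHost $vmHost -JobGroup $jobGroup -RunAsynchronously
```

Because the job runs asynchronously, check the Jobs view in the VMM console to confirm that the switch ends up with both adapters before you continue with the cluster creation.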

A bug report has been filed with the VMM team. I am hoping we can figure out soon why this is not working.

2 thoughts on “Unable to Bare Metal Deploy new S2D host from VMM with both physical Mellanox nic”

Read your posts and am hoping you might be able to help. We have a 6-node 2016 S2D cluster using Mellanox NICs and SET (Switch Embedded Teaming). We are not seeing RDMA traffic flow over the SMB network we defined.

Do you do consulting? Do you know someone that could help us with the config?

I have contacted Patrick and am advising him.

JT