Last year I wrote that IT/OT convergence is a control plane problem, not a network problem. Two months on, I want to put down what we have actually learned by doing the work, across active Azure Local deployments running at OT network level. This post is what I would say to a customer asking me, today, whether Microsoft is the right platform for their OT. The short answer is yes. The longer answer is what this post is about.

A short recap

In the previous post I argued that the hard problems in IT/OT are not about getting traffic across the iDMZ. They are about who decides. Who owns the policy that lands on a workload at L3, who owns the identity that activates a privileged role, who sees the security incident first. The Microsoft platform gives you the technical fabric to answer those questions. It does not answer them for you.

What I had not written about explicitly was the friction that shows up when you actually do the work.

Three things I keep seeing

Three things keep coming back across the engagements I run with industrial customers:

- Customers want to standardise on Microsoft for OT. Not Linux, not VMware, not a niche industrial vendor. Microsoft.

- The friction they hit during deployment is real, repeatable, and fixable.

- None of it is in the documentation as design guidance for OT.

That last point is the one I want to dwell on. The platform exists. The friction is solvable. The guidance is the gap.

Three cases, one pattern

Here are three active engagements I am working on right now, anonymised but representative of what mid-sized to large industrial customers are running into.

Heavy industry, Nordics. Azure Local at L3 in a shared IT/OT tenant, AVD jumphosts for engineering access, Active Directory in front, Defender for Endpoint on Windows nodes. The deployment works. The customer formally accepted the residual risk in their ISMS after IT-pushed Conditional Access policies caused unexpected lockouts of OT operations staff. They are operational and productive.

Critical infrastructure, Nordics. Two-site Azure Local, dedicated OT tenant, two Sentinel workspaces with Lighthouse to a central SOC. Implementation is underway. URL allowlist negotiations with the OT security team are ongoing. The architecture is the right shape; the work is mechanical from here.

Transport sector operator. Evaluated the full Microsoft management stack for L3, declined. Their security team’s air-gap principle could not be reconciled with the persistent outbound connectivity that Azure Local management requires. Microsoft was not selected for that layer.

Two out of three is not a bad ratio. The third one tells you something useful.

The honest list of friction

Across these three cases (and a fourth one I am scoping now), the same six things keep showing up:

1. The URL surface. Azure Local plus Arc plus AKS in West Europe requires more than 80 outbound FQDNs before you add AVD, Intune, or Defender. Arc Gateway helps for AKS traffic but does not cover authentication, CRL, or Arc registration paths.

2. HTTPS inspection. Microsoft’s published guidance is that HTTPS inspection must be disabled on the Azure Local network path. OT iDMZ design typically mandates deep packet inspection. These two requirements pull in opposite directions.

3. Shared-tenant blast radius. In a shared IT/OT tenant, an IT admin’s policy push can land on OT workloads. RBAC and process discipline can mitigate this; hard technical separation inside a single tenant is not available today.

4. Restricted Management Units (RMU). RMUs cover users, devices, and security groups. They do not cover Conditional Access policies, authentication methods, PIM, Access Reviews, or Identity Protection. An OT operations team in a shared tenant cannot self-manage MFA resets or scoped lockouts.

5. Intune profile fit. Patch windows, restart policies, and EDR tuning in Intune are designed for enterprise endpoints. SCADA nodes, HMIs, and engineering stations are not enterprise endpoints. Tuning is possible, but it is per-customer engineering work.

6. Cloud-dependent control plane. Azure Local’s cluster management requires Entra ID and cloud reachability. Local AD covers workloads. There is no offline break-glass path for the cluster control plane itself.

None of these are showstoppers if you go in with eyes open. All of them show up.
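The URL surface in item 1 is the one piece of friction that lends itself to a mechanical check. The following is a minimal sketch, not a Microsoft tool: it assumes you have exported the required FQDNs and the firewall allowlist as plain lists of strings, and the function name `uncovered_fqdns` and the sample entries are hypothetical, chosen for illustration rather than taken from the published endpoint list.

```python
from fnmatch import fnmatchcase


def uncovered_fqdns(required, allowlist):
    """Return the required FQDNs that no allowlist pattern matches.

    Patterns use shell-style wildcards, so "*" also matches across dots.
    That is an assumption about how the allowlist is written; real
    firewalls differ in how they interpret wildcards.
    """
    patterns = [p.lower() for p in allowlist]
    return sorted(
        fqdn
        for fqdn in {r.lower() for r in required}
        if not any(fnmatchcase(fqdn, p) for p in patterns)
    )


# Illustrative entries only, not the published Azure Local endpoint list.
required = [
    "login.microsoftonline.com",
    "management.azure.com",
    "crl.example.com",
]
allowlist = ["*.microsoftonline.com", "management.azure.com"]

gaps = uncovered_fqdns(required, allowlist)
print(gaps)
```

Running a check like this before each allowlist negotiation round gives both sides the same list of gaps to discuss, instead of discovering them one failed connection at a time.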

What works today, the dedicated OT tenant

The pattern that has emerged across our active engagements is a dedicated OT tenant, separate from the IT tenant, with its own Sentinel workspace, its own Defender stack, and Azure Lighthouse used for read-only oversight from a central SOC where one exists. The primary access path is a PAW with FIDO2 plus an AVD jumphost. Conditional Access policies are authored once, by one team, for OT.

It works. It is not officially blessed by Microsoft as a reference architecture, but it works, and we have customers running it in production.

The dedicated OT tenant is now the default I propose to customers when they have OT workloads at scale.

The opportunity I see for Microsoft

This is the part I want to dwell on, because it is genuinely optimistic and I think it is the most useful frame for the customers I work with.

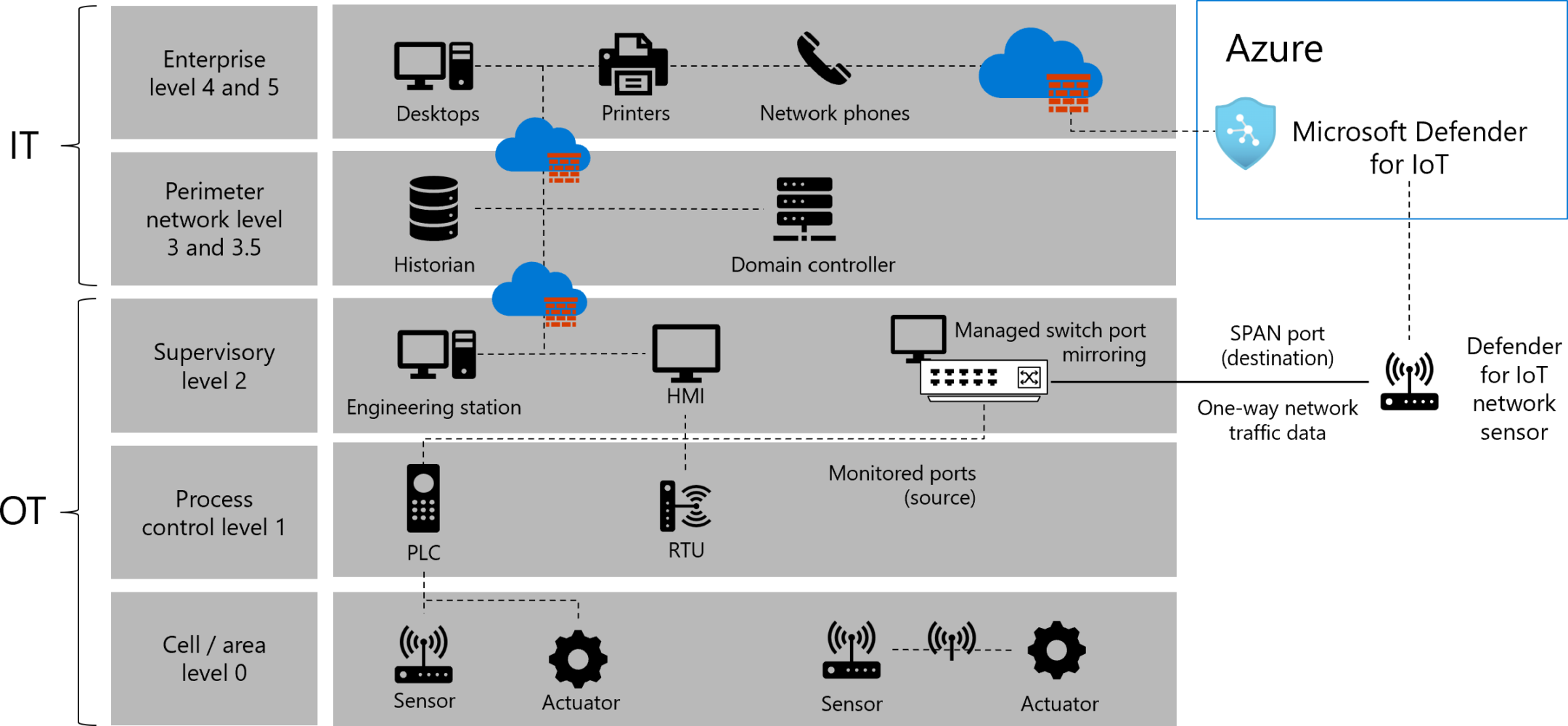

Microsoft has been the only major cloud provider seriously investing in operational technology as a first-class scenario. Defender for IoT is real and improving. Azure Arc made the L3 control plane question answerable. Azure Local gives you on-premises compute that participates in Azure governance without leaving the building. Sentinel handles OT signal alongside IT. The strategic intent is clear from the publicly visible product trajectory.

What I would like to see, as someone working on these deployments daily, is the OT-specific guidance, the kind of structured playbook that Microsoft has published for so many other scenarios:

- A Cloud Adoption Framework chapter for OT

- An MCRA pattern for OT multi-tenant

- A cost and licensing model for the dedicated OT tenant

- An Arc Gateway extension that covers the full Azure Local outbound surface

- An offline MFA path for L3 workloads

- An “OT mode” baseline for Intune and Defender for Endpoint with process-safe defaults

Each of those is a tractable engineering investment. None require Microsoft to invent something new. They require taking what already works in the field, written down by partners and customers, and turning it into officially supported guidance that compliance teams can anchor to.

I am watching the public roadmap with optimism.

Pragmatism, not air-gap religion

The third customer case is worth coming back to. Their security team rejected Azure Local for L3 because it requires persistent outbound connectivity. The decision was final and, in their context, correct.

But it was correct because of THEIR risk model, not because air-gap is universally correct for OT. Most industrial customers I work with are not in that category. They run Windows in OT today, they patch over the iDMZ today, they have VPN paths into engineering networks today. They are not air-gapped; they have controlled connectivity. The right question is not whether there is an internet dependency at L3, because the answer is yes whether they use Azure Local or anything else. The right question is whether the connectivity is bounded, audited, and inspectable, and whether the operational benefit justifies it.

When customers ask me whether Azure Local is a good fit, that is the conversation I have. Not “can we get to zero internet”. “Can we get to controlled, documented, justifiable connectivity that supports your real operational requirements?”

That conversation usually goes well.

Why I am still betting on Microsoft for OT

Three reasons.

The platform is converging on a coherent OT story across Azure Local, Arc, Defender for IoT, and Sentinel. The capability exists; the guidance is catching up.

The investment trajectory is visible from the outside. New Defender for IoT capabilities, Arc Gateway maturing, Azure Local OT-relevant features landing in successive releases. That is not how a stagnating platform behaves.

The alternative is not better. The other major hyperscalers do not have on-premises compute that participates in their cloud governance the way Azure Local does. Linux-based OT stacks are mature but fragmented. The Microsoft path, friction included, is the most coherent route to a unified IT and OT operating model.

Part of my work this year is helping to write that story down: through customer projects, blog posts like this one, and conversations with peers in the community.

If you are running Azure Local at OT network level, or considering it, I would like to hear from you. The pattern is becoming clearer with every engagement, and the more field signal we can pool, the faster the official guidance follows.